Fine-tuning MobileNetV2 (MV2) on a GPU is not hard, especially if you use the TensorFlow Object Detection API in a Docker container. It turns out that deploying a quantization-aware model to a Coral Edge TPU is not hard either.

Doing the same for MyriadX devices is harder. There doesn’t appear to be a good way to take a quantized model and convert it for the MyriadX. If you try to convert your quantized model to OpenVINO you get cryptic errors. If you turn up the tool’s logging, you can see that the errors occur when it reaches the “FakeQuantization” nodes.

Thankfully, for OpenVINO you can simply retrain without quantization and everything works. The downside is that you end up training twice, which is less than ideal.

Right now my pipeline is as follows:

- Annotate using LabelImg (installable via brew on OS X)
- Train (using my NVIDIA RTX 2070 Super). For this I use TensorFlow 1.15.2 and a specific commit of the tensorflow/models repository. A different 1.x version might work, but 2.x definitely did not in my case.
- For Coral:
  - Export to a frozen graph
  - Convert the frozen graph to TFLite
  - Compile the TFLite model for the Edge TPU
  - Write custom C++ code that uses the Coral Edge TPU libraries to run the network on images (in my case, the code can grab frames live from a camera or read them from a file)
- For DepthAI/MyriadX:
  - Re-train without the quantization-aware portion of the pipeline config
  - Convert the frozen graph to OpenVINO’s intermediate representation (IR)
  - Compile the IR to a MyriadX blob
  - Push the blob to a local depthai checkout
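The “re-train without the quantization-aware portion” step is easy to script. In the TF Object Detection API’s pipeline.config, quantization-aware training is switched on by a `graph_rewriter { quantization { ... } }` block. The following is a minimal sketch, assuming that text-proto layout, that removes the block so the same config can be reused for the OpenVINO run. Its brace matching is deliberately naive and assumes there are no braces inside quoted strings or comments. The file names in the usage line are hypothetical.

```python
import re

# Start of a graph_rewriter message in a pipeline.config text proto.
GRAPH_REWRITER = re.compile(r"graph_rewriter\s*\{")


def strip_quantization(config_text: str) -> str:
    """Return config_text with every `graph_rewriter { ... }` block removed.

    Brace matching is naive: braces inside quoted strings or comments are
    assumed not to occur (true for the stock model-zoo configs).
    """
    pieces = []
    pos = 0
    while True:
        match = GRAPH_REWRITER.search(config_text, pos)
        if match is None:
            pieces.append(config_text[pos:])
            return "".join(pieces)
        pieces.append(config_text[pos:match.start()])
        depth = 0
        # Walk from the opening brace to its matching closing brace.
        for end in range(match.end() - 1, len(config_text)):
            if config_text[end] == "{":
                depth += 1
            elif config_text[end] == "}":
                depth -= 1
                if depth == 0:
                    break
        else:
            raise ValueError("unbalanced braces in graph_rewriter block")
        pos = end + 1


if __name__ == "__main__":
    import sys

    # Usage (hypothetical names): python strip_quant.py quant.config myriad.config
    if len(sys.argv) == 3:
        with open(sys.argv[1]) as src, open(sys.argv[2], "w") as dst:
            dst.write(strip_quantization(src.read()))
```

Generating the non-quantized config from the quantized one, rather than keeping two hand-edited copies, keeps the two training runs otherwise identical.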

Let’s go through each stage of the pipeline:

Annotation

For my custom dataset I gathered a set of video clips. I then split those clips into still frames (one per second) using ffmpeg:

ffmpeg -i "$1" -r 1/1 "$1_%04d.jpg"

I then installed LabelImg and annotated all my classes. Once I got proficient with LabelImg I could annotate about one image every second or two. That is fast enough, but certainly not as fast as Tesla’s auto-labeler!
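The one-liner handles a single clip passed as `$1`. To explode a whole folder of clips, a small driver loop helps. The following minimal Python sketch builds the same ffmpeg argument list for each clip and runs it. The function names are hypothetical, and it assumes `ffmpeg` is on the PATH.

```python
import subprocess
import sys
from typing import List


def explode_cmd(clip: str) -> List[str]:
    """ffmpeg argv that samples one frame per second from `clip`.

    Mirrors the shell one-liner: frames are written next to the clip as
    <clip>_0001.jpg, <clip>_0002.jpg, ...
    """
    return ["ffmpeg", "-i", clip, "-r", "1/1", f"{clip}_%04d.jpg"]


def explode_all(clips: List[str]) -> None:
    for clip in clips:
        # Passing a list (no shell) means spaces in file names need no quoting;
        # check=True stops at the first clip ffmpeg fails on.
        subprocess.run(explode_cmd(clip), check=True)


if __name__ == "__main__":
    explode_all(sys.argv[1:])
```

For example, `python explode.py clips/*.mp4` would write the frames of every clip next to that clip.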

On my MacBook, the following worked for installing LabelImg:

conda create --name deeplearning python

conda activate deeplearning

pip install pyqt5==5.15.2 lxml

pip install labelImg

labelImg
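By default, LabelImg saves each image’s boxes as a Pascal VOC XML file. Before training, it is worth sanity-checking those files, for example by counting boxes per class to spot a class you under-annotated. The following is a minimal standard-library sketch. The helper names are hypothetical, and it assumes the annotations were saved in VOC format rather than LabelImg’s YOLO mode.

```python
import xml.etree.ElementTree as ET
from collections import Counter
from pathlib import Path
from typing import List, Tuple, Union

# (class name, xmin, ymin, xmax, ymax) in pixels
Box = Tuple[str, int, int, int, int]


def read_voc(xml_data: Union[str, bytes]) -> Tuple[str, List[Box]]:
    """Parse one Pascal VOC annotation into (image filename, boxes)."""
    root = ET.fromstring(xml_data)
    boxes: List[Box] = []
    for obj in root.iter("object"):
        bndbox = obj.find("bndbox")
        if bndbox is None:
            continue
        # float() first so coordinates written as "12.0" also parse.
        xmin, ymin, xmax, ymax = (
            int(float(bndbox.findtext(tag))) for tag in ("xmin", "ymin", "xmax", "ymax")
        )
        boxes.append((obj.findtext("name"), xmin, ymin, xmax, ymax))
    return root.findtext("filename", default=""), boxes


def class_counts(folder: str) -> Counter:
    """Count boxes per class across every *.xml file in `folder`."""
    counts: Counter = Counter()
    for path in sorted(Path(folder).glob("*.xml")):
        _, boxes = read_voc(path.read_bytes())
        counts.update(box[0] for box in boxes)
    return counts
```

Calling `print(class_counts("annotations"))` (the folder name is hypothetical) shows at a glance how balanced the dataset is.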

Training

In my case, MobileNetV2 meets all my needs: it is fast, fairly accurate, and runs on all my devices (both in software and on the Coral and OAK-D-Lite).

There are plenty of tutorials on training MobileNetV2 in general. For example, you can start from one of the excellent TensorFlow Docker images. By no means should you assume you are done once you have the image, unless you are literally just running someone else’s tutorial. The moment you bring in your own dataset you will have to carry out a number of steps, and most likely they will fail. Over and over. So script them.

But before we get to that, let’s talk versions. I found that the models produced by TensorFlow 2 didn’t work well with any of my hardware accelerators. Version 1.15.2 worked well, however, so I went with that. I also tried other TensorFlow 1.x versions and had issues with them too. I would like to dig into the cause, but have not done so yet.

See my GitHub repo for an example Dockerfile. For my GPU (an RTX 2070 Super), I had to work around memory-growth issues by patching the TensorFlow Object Detection API’s model_main.py. I also reduced the batch size in my pipeline config from the default 24 to 6. Without these fixes, training would crash mysteriously with an unhelpful error message. If only those blasted bitcoin miners hadn’t made GPUs so expensive, perhaps I could upgrade to a GPU with more memory!
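The batch-size change is another edit worth scripting rather than making by hand each time. The following minimal sketch covers only that part, not the model_main.py memory-growth patch. It rewrites every `batch_size: N` field in a pipeline.config text proto and fails loudly if there is none. Rewriting all occurrences, rather than only the one inside `train_config`, is a simplifying assumption.

```python
import re

# Matches `batch_size: <int>` fields anywhere in a pipeline.config text proto.
BATCH_SIZE = re.compile(r"(\bbatch_size\s*:\s*)\d+")


def set_batch_size(config_text: str, batch_size: int) -> str:
    """Rewrite every batch_size field to `batch_size` (all occurrences)."""
    # \g<1> keeps the "batch_size: " prefix; only the number is replaced.
    new_text, count = BATCH_SIZE.subn(rf"\g<1>{batch_size}", config_text)
    if count == 0:
        raise ValueError("no batch_size field found in pipeline config")
    return new_text
```

Raising an error when nothing matches means a typo in the config path or field name shows up immediately, instead of as another out-of-memory crash an hour into training.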

It is also worth noting that the MobileNet checkpoint I started from, and its pipeline config, differed between the Coral and Myriad devices:

- Coral: ssd_mobilenet_v2_quantized_300x300_coco_2019_01_03

- Myriad: ssd_mobilenet_v2_coco_2018_03_29

Update: it didn’t seem to matter which MobileNet version I used; both work.

With my dataset and pipeline configuration, I reached a loss of about 0.3 after roughly 100,000 iterations. On my GPU this took about 3 hours, which was not bad at all.
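Those two numbers give a useful throughput figure for planning future runs. The following is a small worked calculation, assuming the run used the reduced batch size of 6 and that “about 3 hours” is exact.

```python
from typing import Tuple


def training_rate(steps: int, hours: float, batch_size: int) -> Tuple[float, float]:
    """Return (steps per second, images per second) for a training run."""
    steps_per_s = steps / (hours * 3600)
    return steps_per_s, steps_per_s * batch_size


# Figures from the run above; batch_size=6 assumes the reduced batch was used.
steps_per_s, images_per_s = training_rate(100_000, 3, 6)
print(f"{steps_per_s:.1f} steps/s, {images_per_s:.0f} images/s")
# prints: 9.3 steps/s, 56 images/s
```

Under the same assumption, the run processed 100,000 × 6 = 600,000 images in total. Dividing that by the number of annotated frames gives the approximate number of epochs.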

Deploying to Coral

Coral requires a model trained with quantization-aware training and exported to TFLite. Once exported, the TFLite model must be compiled for the Edge TPU.
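The export, convert, and compile steps can be chained in a script. The following minimal sketch only builds the three command lines; it does not run them. It assumes three tools: the TF 1.x Object Detection API’s `export_tflite_ssd_graph.py` (run from `models/research`), TF 1.15’s `tflite_convert`, and Coral’s `edgetpu_compiler`. The flag values follow Coral’s TF1-era SSD retraining instructions and should be checked against your installed versions. The checkpoint prefix and output names are hypothetical.

```python
from typing import List

# Tensor names emitted by export_tflite_ssd_graph.py with the post-processing op.
INPUT_ARRAYS = "normalized_input_image_tensor"
OUTPUT_ARRAYS = ",".join(
    ["TFLite_Detection_PostProcess"]
    + [f"TFLite_Detection_PostProcess:{i}" for i in range(1, 4)]
)


def coral_commands(pipeline: str, ckpt: str, out_dir: str) -> List[List[str]]:
    return [
        # 1. Frozen graph (tflite_graph.pb) with the TFLite SSD post-processing op.
        ["python", "object_detection/export_tflite_ssd_graph.py",
         f"--pipeline_config_path={pipeline}",
         f"--trained_checkpoint_prefix={ckpt}",
         f"--output_directory={out_dir}",
         "--add_postprocessing_op=true"],
        # 2. Fully quantized uint8 TFLite model (TF 1.x converter flags).
        ["tflite_convert",
         f"--graph_def_file={out_dir}/tflite_graph.pb",
         f"--output_file={out_dir}/detect.tflite",
         "--inference_type=QUANTIZED_UINT8",
         f"--input_arrays={INPUT_ARRAYS}",
         f"--output_arrays={OUTPUT_ARRAYS}",
         "--input_shapes=1,300,300,3",
         "--mean_values=128",
         "--std_dev_values=128",
         "--change_concat_input_ranges=false",
         "--allow_custom_ops"],
        # 3. Edge TPU compilation; writes detect_edgetpu.tflite into out_dir.
        ["edgetpu_compiler", "-o", out_dir, f"{out_dir}/detect.tflite"],
    ]
```

To execute the commands, loop over the result with `subprocess.run(cmd, check=True)`. Keeping the command construction separate makes it easy to print and inspect the exact flags when one of the steps fails.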

On the Coral, switching from the default MobileNet model to your custom one is simple enough: literally only the filenames change. You must point the code at your new model and at your new label map (so it can map class IDs to the correct class names).
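The Object Detection API’s label map is a text proto (`item { id: 1 name: '...' }`), while C++ runtime code usually prefers a plain `id name` text file. The following minimal sketch converts one into the other. Its regex parsing assumes the standard flat `item` layout. The `offset` parameter is there because TFLite SSD models typically report 0-based class indices while label-map IDs start at 1. Treat the `offset=-1` usage as an assumption to verify against your model’s actual output.

```python
import re
from typing import Dict

ITEM_RE = re.compile(r"item\s*\{(.*?)\}", re.DOTALL)
ID_RE = re.compile(r"\bid\s*:\s*(\d+)")
# \bname does not match inside display_name ('_' is a word character).
NAME_RE = re.compile(r"\bname\s*:\s*['\"]([^'\"]*)['\"]")


def read_label_map(pbtxt: str) -> Dict[int, str]:
    """Map label-map ids to class names from a label_map.pbtxt string."""
    labels: Dict[int, str] = {}
    for body in ITEM_RE.findall(pbtxt):
        id_match, name_match = ID_RE.search(body), NAME_RE.search(body)
        if id_match and name_match:
            labels[int(id_match.group(1))] = name_match.group(1)
    return labels


def to_label_lines(labels: Dict[int, str], offset: int = 0) -> str:
    """Render `id name` lines, shifting ids by `offset`, sorted by id."""
    return "".join(f"{i + offset} {name}\n" for i, name in sorted(labels.items()))
```

Generating the runtime labels file from the same label map used for training keeps the two from drifting apart when you add a class.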

Deploying to MyriadX

MyriadX was a lot more difficult. To deploy a trained model, you must first convert it to the OpenVINO IR format, as follows:

source /opt/intel/openvino_2021/bin/setupvars.sh && \
python /opt/intel/openvino_2021/deployment_tools/model_optimizer/mo.py \
  --input_model learn_tesla/frozen_graph/frozen_inference_graph.pb \
  --tensorflow_use_custom_operations_config /opt/intel/openvino_2021/deployment_tools/model_optimizer/extensions/front/tf/ssd_v2_support.json \
  --tensorflow_object_detection_api_pipeline_config source_model_ckpt/pipeline.config \
  --reverse_input_channels \
  --output_dir learn_tesla/openvino_output/ \
  --data_type FP16

The IR can then be converted to a blob by running OpenVINO’s compile_tool. I had significant problems with compile_tool, mainly because it didn’t like something about my trained model. In the end I found the cause: OpenVINO simply doesn’t like the nodes that quantization-aware training inserts into the graph. Removing the quantization section from the pipeline config solved this. However, this cuts the other way for Coral, which ONLY accepts quantization-aware models. (Caveat: in some cases you can use post-training quantization instead, but Coral explicitly states that this doesn’t work in all cases.)
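Because the failures traced back to fake-quantization nodes, it can save time to check the IR before compiling. The following minimal sketch has two parts. First, it scans an IR `.xml` file (if the Model Optimizer got far enough to emit one) for layers of type `FakeQuantize`. Second, it builds a compile_tool command using only the `-m`, `-d`, and `-o` options. The compile_tool path is an assumption based on a default OpenVINO 2021 install, and the blob name is hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import List, Union

# Assumed default location in an OpenVINO 2021 install; adjust to yours.
COMPILE_TOOL = "/opt/intel/openvino_2021/deployment_tools/tools/compile_tool/compile_tool"


def fake_quantize_layers(ir_xml: Union[str, bytes]) -> List[str]:
    """Names of FakeQuantize layers in the contents of an OpenVINO IR .xml."""
    root = ET.fromstring(ir_xml)
    return [layer.get("name", "") for layer in root.iter("layer")
            if layer.get("type") == "FakeQuantize"]


def myriad_compile_cmd(xml_path: str, blob_path: str) -> List[str]:
    """compile_tool argv: IR in (-m, .bin alongside), MYRIAD target (-d), blob out (-o)."""
    return [COMPILE_TOOL, "-m", xml_path, "-d", "MYRIAD", "-o", blob_path]
```

With the Model Optimizer command above, the IR is written to learn_tesla/openvino_output/ and, by default, named after the input model (frozen_inference_graph.xml). If `fake_quantize_layers` returns anything, the model was most likely trained with the quantization block still in its pipeline config. Run the printed compile_tool command in a shell where setupvars.sh has been sourced.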

Once the blob was ready, I put it, along with a JSON snippet, in the resources/nn/<custom> folder of my depthai checkout. I saw the full frame rate at 300×170, or about 10 fps at full resolution (running from a file). Not bad!

Runtime performance

On the Google Coral I am able to build an extremely low-latency pipeline: a frame goes from capture to inference in only a few milliseconds. Inference itself completes in about 20 ms with my fine-tuned model, which allows up to roughly 50 inferences per second, so I am able to operate at full speed.

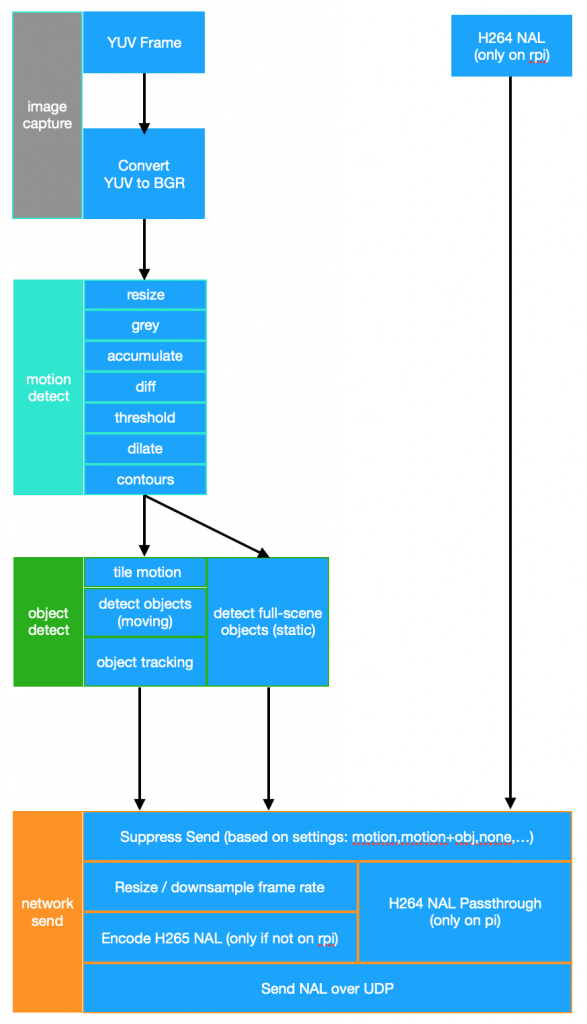
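Comparing the Coral and MyriadX pipelines fairly needs the same measurement on both. The following minimal sketch summarizes per-frame timestamps into mean and worst-case capture-to-result latency plus the achieved output rate. How the timestamps are collected is an assumption, for example `time.monotonic()` read when a frame is captured and again when its detections arrive. The function name is hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple


def summarize(frames: List[Tuple[float, float]]) -> Dict[str, float]:
    """Summarize per-frame (capture_time_s, result_time_s) pairs.

    Returns mean and worst-case capture-to-result latency in milliseconds,
    plus the achieved output rate in frames per second.
    """
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    latencies_ms = [(done - captured) * 1000 for captured, done in frames]
    span_s = frames[-1][1] - frames[0][1]
    if span_s <= 0:
        raise ValueError("result timestamps must increase")
    return {
        "mean_ms": mean(latencies_ms),
        "max_ms": max(latencies_ms),
        "fps": (len(frames) - 1) / span_s,
    }
```

Reporting the maximum alongside the mean matters for a live pipeline. A few slow frames can be hidden by a good average yet still cause visible stutter.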

I have not fully characterized the latency of the MyriadX-based pipeline. Running from a file, it was able to keep up at 30 fps. My Coral pipeline pulls video frames down with my own C/C++ code, including the YUV->BGR color-space conversion. Since the MyriadX device can capture and process frames on the device itself, it has the potential for low latency, but this has yet to be tested.
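For reference, the YUV->BGR step in a host-side pipeline comes down to a per-pixel linear transform. The following is a minimal pure-Python sketch for clarity, not speed; a real pipeline would do this in C/C++ with SIMD or a library. It makes two assumptions that depend on your camera. First, it uses full-range BT.601 (JFIF) coefficients, whereas many cameras deliver limited-range (16–235) BT.601 or BT.709. Second, it assumes a packed YUYV (YUY2) frame layout, in which each 4-byte group `Y0 U Y1 V` describes two pixels that share one chroma sample.

```python
from typing import Tuple


def _clamp(x: float) -> int:
    """Round to the nearest integer and clamp to the 0..255 byte range."""
    return max(0, min(255, round(x)))


def yuv_to_bgr(y: int, u: int, v: int) -> Tuple[int, int, int]:
    """Full-range BT.601 (JFIF) YUV -> BGR for a single pixel."""
    d, e = u - 128, v - 128
    r = y + 1.402 * e
    g = y - 0.344136 * d - 0.714136 * e
    b = y + 1.772 * d
    return _clamp(b), _clamp(g), _clamp(r)


def yuyv_to_bgr(data: bytes, width: int, height: int) -> bytearray:
    """Convert a packed YUYV frame to interleaved BGR bytes (3 per pixel)."""
    if width % 2 or len(data) != width * height * 2:
        raise ValueError("expected an even width and width*height*2 bytes")
    out = bytearray()
    for i in range(0, len(data), 4):
        y0, u, y1, v = data[i], data[i + 1], data[i + 2], data[i + 3]
        # Both pixels in the pair share the same U and V samples.
        out.extend(yuv_to_bgr(y0, u, v))
        out.extend(yuv_to_bgr(y1, u, v))
    return out
```

The output is BGR rather than RGB because that is the channel order OpenCV-style consumers expect. Which order the network itself wants depends on how it was converted. For example, the `--reverse_input_channels` flag in the Model Optimizer command above exists to handle exactly this.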